Google said on Monday it would make it mandatory for advertisers to disclose election ads that use digitally altered content to depict real or realistic-looking people or events, its latest step to battle election misinformation. The update to the disclosure requirements under the political content policy requires marketers to select a checkbox in the “altered or synthetic content” section of their campaign settings.

The rapid growth of generative AI, which can create text, images and video in seconds in response to prompts, has raised concerns about its potential misuse. The rise of deepfakes, content convincingly manipulated to misrepresent someone, has further blurred the lines between the real and the fake. Google said it will generate an in-ad disclosure for feeds and shorts on mobile phones and in-streams on computers and television. For other formats, advertisers will be required to provide a “prominent disclosure” that is noticeable to users. The “acceptable disclosure language” will vary according to the context of the ad, Google said.

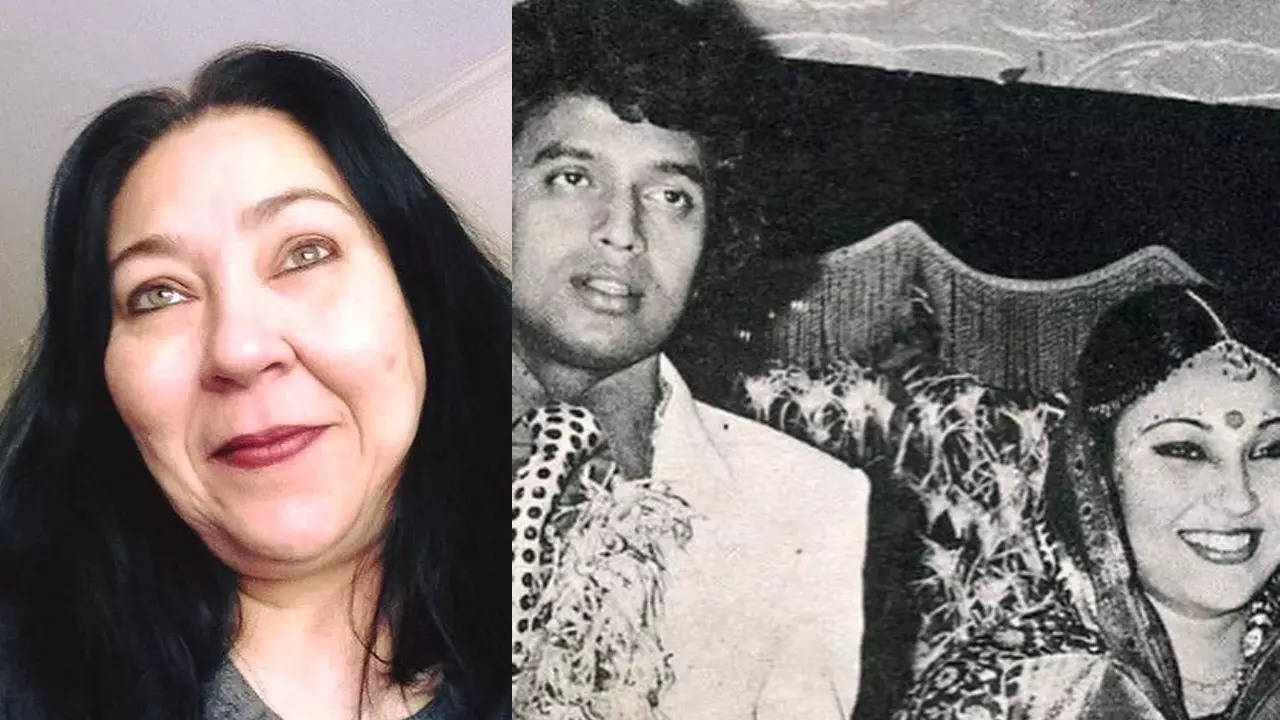

In April, during the general election in India, fake videos showing two Bollywood actors criticizing Prime Minister Narendra Modi went viral online. Both AI-generated videos asked people to vote for the opposition Congress party. Separately, Sam Altman-led OpenAI said in May that it had disrupted five covert influence operations that sought to use its AI models for “deceptive activity” across the internet, in an “attempt to manipulate public opinion or influence political outcomes.” Meta Platforms said last year that it would make advertisers disclose whether AI or other digital tools were being used to alter or create political, social or election-related advertisements on Facebook and Instagram.